by Robin Dost

Part 4 of 7 of building the Malwarebox Ecosystem

Website: https://iimql.malwarebox.eu

GitHub: https://github.com/MalwareboxEU/IIMQL

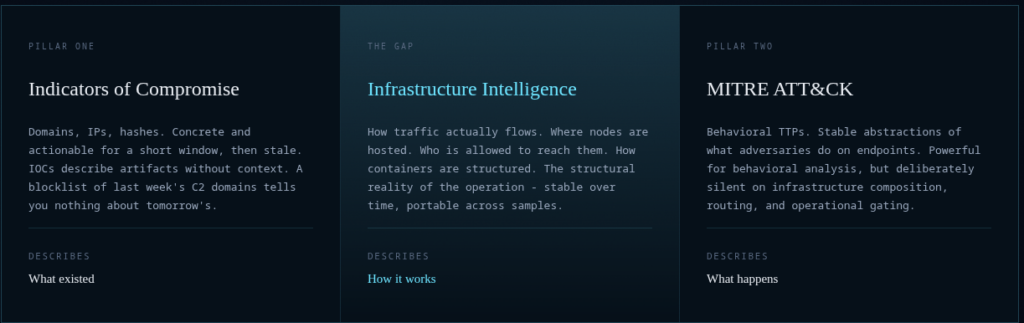

In the previous part, I introduced IIM, the Infrastructure Intelligence Model.

The short version:

IOCs tell you what existed

ATT&CK tells you what adversaries do on endpoints

IIM describes how adversary infrastructure is composed

Entry points, redirectors, staging hosts, payload locations, C2 endpoints, relations between them, techniques attached to infrastructure roles, patterns abstracted from concrete observations

Basically the stuff that is usually buried inside analyst prose, screenshots, PDF diagrams and “we saw this kind of redirect chain again” comments

IIM gives that layer a structure.

But once you have a structure, the next obvious question is:

How do you search it?

Because describing one chain is nice.

Describing ten chains is useful.

Describing a few thousand chains and then manually scrolling through JSON like a threat intelligence raccoon in a dumpster is not a workflow.

That is where IIMQL comes in.

IIMQL is the query language for the Infrastructure Intelligence Model.

It is built to search, filter and correlate IIM chains, roles, entities, relations and structural patterns in a way that actually fits the model.

IIMQL is for questions like:

- Show me every chain where a staging artifact drops a payload that connects to a C2.

- Show me every actor using dynamic DNS in the C2 role.

- Show me every chain where the entry point flows into a redirector before reaching a payload.

- Show me every payload that connects to infrastructure annotated with a specific IIM technique.

- Show me every infrastructure chain that looks like this shape, even if every domain, IP and hash changed.

That last part is the whole point.

IOC feeds let you ask: Is this domain bad?

IIMQL lets you ask: Have I seen this operational shape before?

That is a very different question.

And frankly, it is the question we should have been asking more often.

IIMQL.

When you have thousands of chains, patterns, and actor profiles, yours plus federated feeds, the interesting questions are structural. IIMQL turns them into one-liners.

Why a query language?

IIM's whole pitch is that adversary infrastructure is structural.

- A campaign chain is not just a bag of domains, it is a directed graph.

- An entry point references or downloads a staging artifact.

- A staging artifact drops or executes a payload.

- The payload connects to a C2.

- A redirector may sit in between.

- A dead-drop resolver may point to the final endpoint.

- DNS may rotate.

- Hosting may rotate.

- The operator may burn the entire surface tomorrow and rebuild it with new artifacts.

The structure often survives.

And if the structure survives, you should be able to query it.

That sounds obvious, but in practice threat intelligence tooling often stops right before that point.

- We can store indicators.

- We can tag indicators.

- We can enrich indicators.

- We can export indicators.

- We can re-import the same indicators into another tool and pretend that interoperability happened.

But asking structural questions across infrastructure chains is still weirdly painful.

You either write custom Python, use a graph database directly, abuse a SIEM query language that was never designed for this, or convert the whole thing into a generic graph model and lose the IIM semantics on the way.

IIMQL avoids that.

- It is small on purpose.

- It speaks IIM directly.

- It does not try to become a general purpose graph query language.

IIMQL has one job:

Ask useful questions against IIM data.

The basic shape

Every IIMQL query follows a simple idea:

MATCH what you care about

WHERE the conditions are true

RETURN the fields you want back

For example:

MATCH chain

That returns chains.

MATCH chain WHERE actor_id = "MB-0001"

That returns chains attributed to a specific actor ID.

MATCH position WHERE role = "c2"

That returns positions where an entity plays the C2 role.

MATCH entity WHERE type = "domain" AND value =~ /duckdns/

That returns domain entities matching a regex.

MATCH relation WHERE type = "drops"

That returns relations where one artifact drops another.

Nothing exotic.

Nothing that requires a PhD in graph theory or three tabs of vendor documentation.

The interesting part starts when you query shapes.

Structural matching

IIM chains are directed graphs.

So IIMQL supports graph style matching for chain shapes.

Example:

MATCH (:entry)-->(:staging)-->(:payload)-->(:c2)

That asks for chains where an entry position flows into staging, then payload, then C2.

You can make it stricter by requiring relation types:

MATCH (:entry)-[:download]->(:staging)-[:drops]->(:payload)-[:connect]->(:c2)

Now the shape is not just role order.

It also requires a download relation, then a drops relation, then a connect relation.

That matters.

Because “entry to staging to payload to C2” is a useful broad shape.

But “entry downloads staging, staging drops payload, payload connects to C2” is much closer to an operational flow.

IIMQL lets you move between those levels without losing the model.
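To make the two levels concrete, here is a minimal Python sketch of the idea behind shape matching. It is not how IIMQL is implemented, and the toy `chain` dictionary is a simplified stand-in I made up for illustration, not the real IIM JSON schema. The helper `match_shape` is hypothetical too. It walks direct edges only, first by role order and then additionally by relation type.

```python
# Toy chain: a simplified, hypothetical layout (NOT the real IIM schema).
chain = {
    "chain_id": "demo-1",
    "positions": {
        "p1": {"role": "entry", "value": "http://lure.example/doc"},
        "p2": {"role": "staging", "value": "http://stage.example/a.zip"},
        "p3": {"role": "payload", "value": "a.exe"},
        "p4": {"role": "c2", "value": "c2.example"},
    },
    # Each relation is (source position, relation type, target position).
    "relations": [
        ("p1", "download", "p2"),
        ("p2", "drops", "p3"),
        ("p3", "connect", "p4"),
    ],
}


def match_shape(chain, roles, rel_types=None):
    """Return position-id paths whose roles (and optionally edge types) match.

    roles:     role names in order, e.g. ["entry", "staging"]
    rel_types: one relation type per hop, or None to accept any type
    """
    positions = chain["positions"]
    # Start with every position that plays the first role.
    paths = [[pid] for pid, pos in positions.items() if pos["role"] == roles[0]]
    for step, role in enumerate(roles[1:]):
        wanted = rel_types[step] if rel_types else None
        extended = []
        for path in paths:
            for src, rel_type, dst in chain["relations"]:
                if src != path[-1]:
                    continue  # edge does not start at the current position
                if wanted is not None and rel_type != wanted:
                    continue  # edge exists but has the wrong relation type
                if positions[dst]["role"] == role:
                    extended.append(path + [dst])
        paths = extended
    return paths


shape = ["entry", "staging", "payload", "c2"]
broad = match_shape(chain, shape)
strict = match_shape(chain, shape, ["download", "drops", "connect"])
wrong = match_shape(chain, shape, ["download", "execute", "connect"])
```

On this toy chain, the broad and strict shapes both match the same path, while swapping `drops` for `execute` matches nothing. Same roles, different operational flow.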

You can also use aliases:

MATCH (e:entry)-->(s:staging)

WHERE e.techniques HAS "IIM-T019"

RETURN chain.chain_id, s.entity.value

This asks for chains where an entry position with a specific IIM technique flows into staging, then returns the chain ID and the staging entity value.

That is the kind of query I wanted IIMQL to make boring.

Because this should be boring.

Analysts should not need to write custom scripts for every question that is structurally obvious once the data is modeled.

Targets: chain, position, entity, relation and graph shapes

IIMQL can query different levels of the model.

MATCH chain is for top-level chain metadata.

Useful for actor IDs, confidence, observed timestamps, review flags, imported sources and general filtering.

MATCH position is for role assignments.

This is where you ask things like:

- Which entities acted as C2?

- Which positions carry IIM-T008?

- Which staging roles are still tentative?

- Which payload positions appear in confirmed chains?

MATCH entity is for raw artifacts.

Domains, IPs, URLs, files, hashes, emails, certificates, ASNs.

This is the closest IIMQL gets to a classic IOC workflow, but with one important difference:

Entities can still be returned with chain and position context.

A domain is not just a domain.

It may be a redirector in one chain and C2 in another.

That distinction matters.
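A small sketch of why that context matters. The tuples below are made-up sample data in a simplified layout of my own, and `roles_by_entity` is a hypothetical helper, not part of IIMQL. It just groups the roles an entity value has played per chain.

```python
from collections import defaultdict

# Hypothetical, simplified positions: (chain_id, role, entity_value).
positions = [
    ("chain-a", "redirector", "cdn.example"),
    ("chain-a", "c2", "panel.example"),
    ("chain-b", "c2", "cdn.example"),
]


def roles_by_entity(positions):
    """Map each entity value to the set of (chain_id, role) pairs it appears in."""
    index = defaultdict(set)
    for chain_id, role, value in positions:
        index[value].add((chain_id, role))
    return index


index = roles_by_entity(positions)
cdn_roles = sorted(index["cdn.example"])
# cdn_roles == [("chain-a", "redirector"), ("chain-b", "c2")]
```

A flat IOC list would record `cdn.example` once. With role context, the same value shows up as two different things in two different chains.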

MATCH relation is for edges.

Download, redirect, drops, execute, connect, resolves-to, references, communicates-with.

This lets you ask questions about how infrastructure pieces interact, not just what they are.

And graph patterns are for the good stuff.

The operational shapes.

The part that survives rotation.

Field filtering

IIMQL supports the basic operators you would expect.

Equality:

role = "c2"

Inequality:

role != "entry"

Ordering:

sequence_order > 2

Regex:

value =~ /\.duckdns\.org$/

Array membership:

techniques HAS "IIM-T008"

Substring matching:

value CONTAINS ".example"

Set membership:

confidence IN ["confirmed", "likely"]

Boolean logic:

role = "c2" AND NOT needs_review = true

Again, boring by design.

My point is not to be clever, my point is to be precise 🙂
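For readers who think in Python, the operators above map roughly onto plain predicates. This is a sketch under assumptions, not the IIMQL evaluator: the `position` dictionary is a made-up flat record, I assume `=~` behaves like a regex search rather than a full match, and I assume `NOT` applies to the comparison that follows it.

```python
import re

# Hypothetical flat record; real IIM positions are structured differently.
position = {
    "role": "c2",
    "sequence_order": 4,
    "value": "update.duckdns.org",
    "techniques": ["IIM-T008"],
    "confidence": "likely",
    "needs_review": False,
}

# Each key is the IIMQL condition, each value its rough Python equivalent.
checks = {
    'role = "c2"': position["role"] == "c2",
    'role != "entry"': position["role"] != "entry",
    "sequence_order > 2": position["sequence_order"] > 2,
    # Assumption: regex is applied as a search, not a full match.
    r"value =~ /\.duckdns\.org$/": re.search(r"\.duckdns\.org$", position["value"]) is not None,
    'techniques HAS "IIM-T008"': "IIM-T008" in position["techniques"],
    'value CONTAINS ".example"': ".example" in position["value"],
    'confidence IN ["confirmed", "likely"]': position["confidence"] in ["confirmed", "likely"],
    # Assumption: NOT binds to the comparison directly after it.
    'role = "c2" AND NOT needs_review = true': position["role"] == "c2"
    and not (position["needs_review"] is True),
}

for condition, result in checks.items():
    print(f"{condition:45} {result}")
```

Every condition holds for this record except the `CONTAINS ".example"` check, because the value is a duckdns hostname.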

Example: finding fast-flux or DGA C2

Let’s say you have a corpus of IIM chains and want to find C2 positions that carry either Fast-Flux DNS or DGA technique annotations.

In IIMQL, that becomes:

MATCH position

WHERE role = "c2"

AND (techniques HAS "IIM-T007" OR techniques HAS "IIM-T009")

RETURN chain.chain_id, chain.actor_id, entity.value, techniques

That is the difference between “I have data” and “I can ask operational questions against my data”.

The query is not looking for a known bad IP.

It is looking for a role in a chain with specific infrastructure behavior.

That is a completely different analytical layer.

And it maps directly to IIM’s purpose.

IIM technique IDs describe infrastructure behavior, not endpoint behavior.

So when you query for IIM-T007 or IIM-T009, you are not asking “which malware family is this”, you are asking “which infrastructure role carries this property”.

That makes the result usable for clustering, detection engineering, reporting and pattern abstraction.

Example: finding a known operational tail

Another simple query:

MATCH (s:staging)-->(p:payload)-->(c:c2)

RETURN chain.chain_id, p.entity.value, c.entity.value

This finds chains where staging flows into payload and payload flows into C2.

That tail is common in real operations.

The concrete filenames, domains and IPs can all change.

The chain shape stays useful.

And if you want to be stricter:

MATCH (:staging)-->(:payload)-[:connect]->(:c2)

Now the payload must connect to the C2 through a connect relation.

This is where IIMQL becomes more than a filter language:

It lets you treat adversary infrastructure like a structured system.

Not a spreadsheet, not a blocklist, not a pile of JSON.

A system.

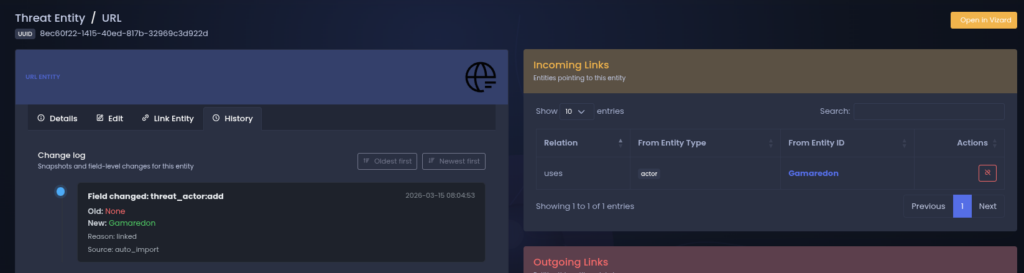

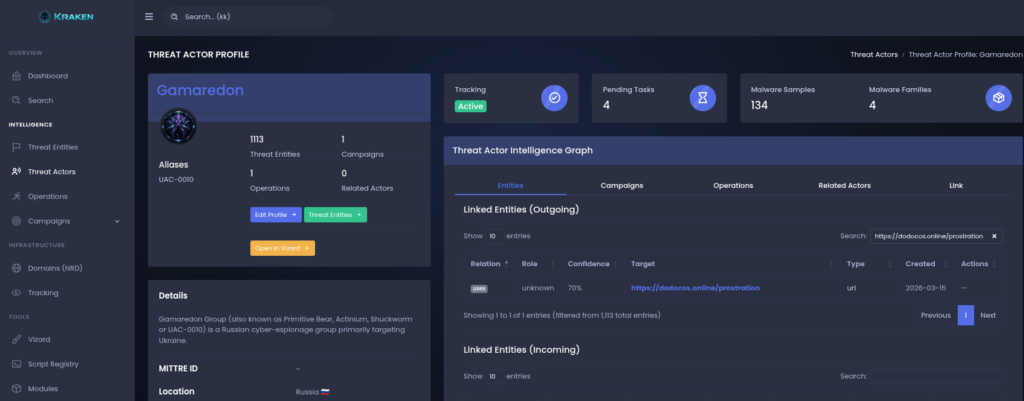

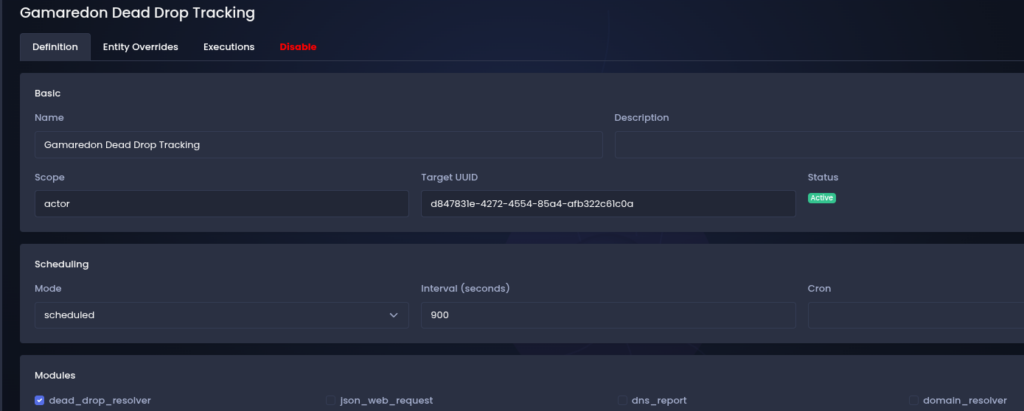

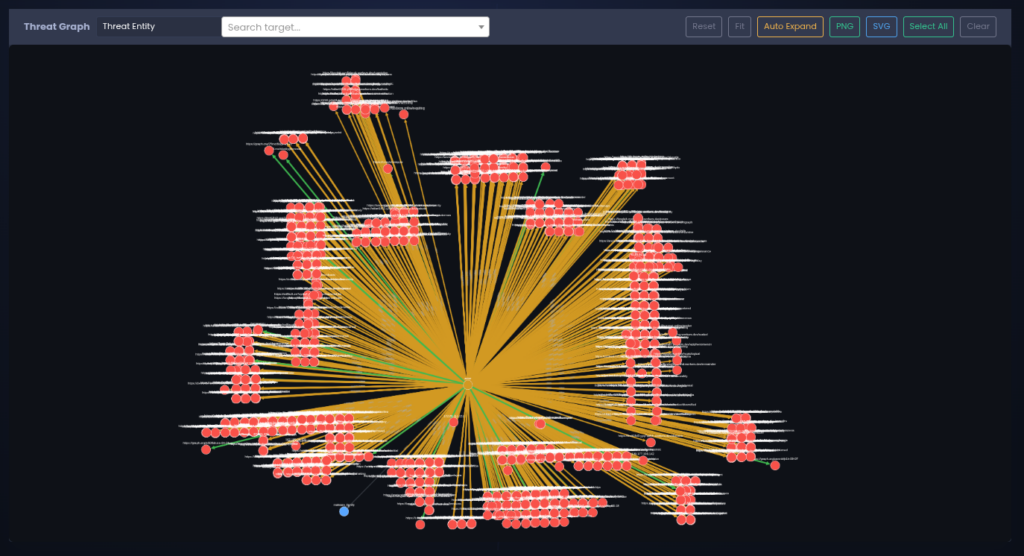

Why this matters for Malwarebox

IIMQL is part of the same larger idea as IIM, ACDP and Kraken.

- IIM gives adversary infrastructure a grammar.

- ACDP gives prioritization a methodology.

- Kraken is the working environment where actor infrastructure is tracked as a living graph.

- IIMQL is the thing that lets you ask questions across that graph without hardcoding the question into the platform.

You can publish patterns all day, but if another organization cannot match those patterns against their own chains, the value stays mostly theoretical.

IIMQL is the bridge between “we have a structural model” and “we can actually operationalize this”.

The long-term goal is a European CTI ecosystem that can track, model, query and share infrastructure intelligence without depending entirely on US vendor platforms, closed enrichment systems or PDF-based trust rituals from 2012.

But more about this in the next part of this series.

Federation needs queryability

In the IIM article I described the federation idea:

Org A observes a campaign.

Org A builds an IIM chain.

Org A abstracts it into a pattern.

Org B receives the pattern.

Org B matches it against their own observations.

The concrete domains, IPs and hashes may be completely different.

The structure still matches.

That is the whole “patterns instead of indicators” argument.
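A rough sketch of that argument in Python. The chain layout is a simplified stand-in I invented for illustration, and `shape_signature` is a hypothetical helper. IIM patterns are richer than this: the sketch only reduces each chain to its role-to-role edges and compares the results.

```python
def shape_signature(chain):
    """Reduce a chain to sorted (source role, relation type, target role) edges."""
    roles = {pid: pos["role"] for pid, pos in chain["positions"].items()}
    return sorted(
        (roles[src], rel_type, roles[dst])
        for src, rel_type, dst in chain["relations"]
    )


# Org A's observation (hypothetical data, simplified layout).
org_a_chain = {
    "positions": {
        "a1": {"role": "entry", "value": "http://lure-one.example/invoice"},
        "a2": {"role": "staging", "value": "http://files-one.example/s.zip"},
        "a3": {"role": "payload", "value": "loader-one.exe"},
        "a4": {"role": "c2", "value": "c2-one.example"},
    },
    "relations": [("a1", "download", "a2"), ("a2", "drops", "a3"), ("a3", "connect", "a4")],
}

# Org B's observation: every concrete artifact is different.
org_b_chain = {
    "positions": {
        "x1": {"role": "entry", "value": "http://lure-two.example/cv"},
        "x2": {"role": "staging", "value": "http://files-two.example/t.iso"},
        "x3": {"role": "payload", "value": "loader-two.dll"},
        "x4": {"role": "c2", "value": "c2-two.example"},
    },
    "relations": [("x3", "connect", "x4"), ("x1", "download", "x2"), ("x2", "drops", "x3")],
}

same_shape = shape_signature(org_a_chain) == shape_signature(org_b_chain)
shared_values = (
    {pos["value"] for pos in org_a_chain["positions"].values()}
    & {pos["value"] for pos in org_b_chain["positions"].values()}
)
```

The two chains share no indicator at all, yet their signatures are identical. An IOC comparison would find nothing here. A structural comparison finds a match.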

But for that to work at scale, you need queryability.

You need to be able to ask:

- Which of my chains match this shape?

- Which actor patterns overlap with my new observations?

- Which chains contain a redirector before payload delivery?

- Which C2 roles use the same infrastructure techniques across different campaigns?

- Which chains are confirmed and which are still tentative?

- Which entities are volatile artifacts and which structural roles keep reappearing?

Without a query layer, every participant in a federation has to reinvent the matching logic locally.

The query language is not just a convenience feature, it is part of making IIM usable as a shared analytical layer.

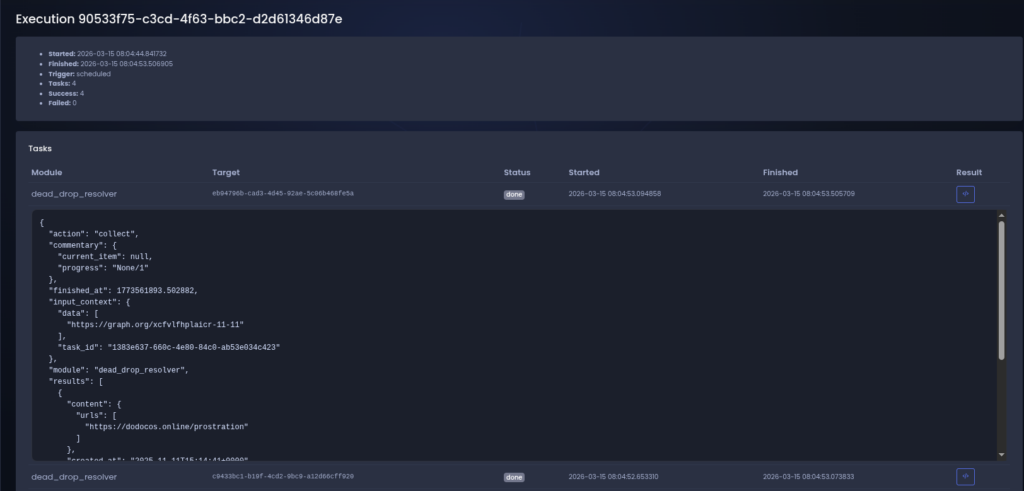

Local first, no runtime dependency circus

IIMQL is implemented in Python and currently has no runtime dependencies beyond the standard library.

That is intentional.

I do not want a query language for CTI data that needs half of PyPI, a Java service, an Elasticsearch cluster, a graph database and a deployment diagram that looks like a hostage situation.

You can install it locally:

git clone https://github.com/Mr128Bit/IIMQL

cd IIMQL

pip install -e .

Then run queries from the CLI:

iimql 'MATCH position WHERE role = "c2"' examples/chains/

You can query from a file:

iimql -f examples/05-fast-flux-c2.iimql examples/chains/

You can return JSON:

iimql --format json 'MATCH entity WHERE type = "domain"' examples/chains/

You can count matches:

iimql --count 'MATCH (:entry)-->(:payload)' examples/chains/

You can pipe chains in:

cat examples/chains/*.json | iimql 'MATCH chain WHERE actor_id = "MB-0001"'

And you can use it as a Python library:

from iimql import parse, execute, load_paths, query_chains

docs = load_paths(["examples/chains/"])

q = parse('MATCH position WHERE role = "c2" AND techniques HAS "IIM-T008"')

for row in execute(q, docs.chains):

    print(row["chain"]["chain_id"], row["entity"]["value"])

That makes it easy to drop into existing SOC tooling, research notebooks, enrichment scripts, internal CTI pipelines or whatever other questionable Python folder has been running in production since 2019.

No judgement ^^

What IIMQL is not

IIMQL is not a replacement for SQL.

If your data is relational, use SQL.

IIMQL is not a replacement for Cypher.

If you already have a graph database and want general graph traversal, Cypher is mature and extremely good at that.

IIMQL is not a replacement for STIX Patterning.

STIX Patterning is for matching cyber observable patterns in STIX data.

IIMQL is not a detection language like Sigma or YARA.

It does not match logs or files.

It matches IIM documents.

IIMQL is also not an extension of the IIM specification.

IIMQL consumes IIM data.

It does not define new roles.

It does not define new relation types.

It does not define new technique vocabularies.

The model stays the model. The query layer queries the model.

That separation matters because otherwise every tool starts quietly changing the standard it claims to implement.

And that is how “interoperability” becomes a PowerPoint word.

Current limitations

IIMQL is still early.

The current version is intentionally small and there are limits.

No variable-length paths yet.

So this:

MATCH (:entry)-->(:payload)

means a direct edge.

It does not magically skip everything in between.

That is deliberate for the first cut because structural matching should be predictable before it becomes flexible.
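The difference is easy to show in Python. The role-level edge set below is made-up sample data, and both helpers are hypothetical. `direct` mirrors the behavior described above. `reachable` illustrates what variable-length paths would add, which IIMQL does not offer yet.

```python
from collections import deque

# Hypothetical role-level edges of one chain: entry -> staging -> payload.
edges = {("entry", "staging"), ("staging", "payload")}


def direct(edges, start, goal):
    """True only if there is a single edge from start to goal."""
    return (start, goal) in edges


def reachable(edges, start, goal):
    """True if goal can be reached from start over one or more edges (BFS)."""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for src, dst in edges:
            if src == node and dst not in seen:
                if dst == goal:
                    return True
                seen.add(dst)
                queue.append(dst)
    return False


is_direct = direct(edges, "entry", "payload")        # False: staging sits in between
is_reachable = reachable(edges, "entry", "payload")  # True: two hops away
```

So on this chain, a direct-edge match from entry to payload finds nothing. You have to spell out the staging hop.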

No aggregation yet.

So no GROUP BY, COUNT by field, DISTINCT style reporting or sorting in the first implementation.
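Until aggregation lands, counting and de-duplicating can happen in Python on the result rows. The sketch below assumes rows are dictionaries shaped like the ones in the library example (`row["chain"]`, `row["entity"]`). The rows here are hard-coded sample data, and the presence of `actor_id` under `chain` is my assumption.

```python
from collections import Counter

# Hypothetical result rows, hard-coded so the sketch runs without IIMQL.
rows = [
    {"chain": {"chain_id": "c-1", "actor_id": "MB-0001"}, "entity": {"value": "a.example"}},
    {"chain": {"chain_id": "c-2", "actor_id": "MB-0001"}, "entity": {"value": "b.example"}},
    {"chain": {"chain_id": "c-3", "actor_id": "MB-0002"}, "entity": {"value": "a.example"}},
]

# Rough equivalent of "COUNT ... GROUP BY actor_id".
per_actor = Counter(row["chain"]["actor_id"] for row in rows)

# Rough equivalent of "DISTINCT entity.value", sorted for stable output.
distinct_values = sorted({row["entity"]["value"] for row in rows})

# Rough equivalent of sorting by count, highest first.
top_actor = per_actor.most_common(1)
```

With real data, the rows would come from `execute(...)` instead of a literal list.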

- No joins across chains yet.

- No MATCH pattern yet.

Patterns and feeds can be loaded, but queries currently run against chains.

Technique confidence and role confidence are readable, but there is no nice syntax sugar yet for questions like “show me chains where any position has tentative confidence”.

That will come later.

I would rather ship a small query language that behaves correctly than a big one that lies confidently.

Threat intelligence already has enough of that.

The design principle

The design principle behind IIMQL is simple:

Make structural infrastructure questions cheap.

- If an analyst sees a pattern in one campaign, they should be able to ask for that pattern across the corpus without writing a new parser.

- If a defender wants to find C2 roles using a specific infrastructure technique, that should be one query.

- If a researcher wants to compare actor tradecraft across rotations, they should not have to manually diff IOC lists.

- If a European public-sector team wants to exchange patterns without exposing victim-specific artifacts, they should be able to match those patterns locally against their own observations.

IIMQL is small, but it sits directly in that problem space.

It gives IIM data a usable query surface.

That is necessary if IIM is supposed to be more than a schema.

Why this belongs in the Malwarebox ecosystem

Malwarebox is slowly becoming a stack.

The direction is clear.

The public frameworks stay open because they need review, adoption and criticism.

The private lab stays private where sensitive actor tracking and unfinished research can mature before it becomes public methodology.

That split is intentional.

Open frameworks need a place where mistakes are cheap.

Closed platforms need open interfaces if they are supposed to matter beyond one installation.

IIMQL is one of those interfaces.

It gives the open model a practical way to be used outside Kraken.

That matters because I do not want IIM to become “the format Kraken uses”.

That would be boring and useless.

IIM should be usable by researchers, SOC teams, CERTs, public-sector defenders, vendors, open-source projects and internal tooling.

IIMQL helps with that because it makes the data searchable without requiring the whole Malwarebox stack.

- Install the tool

- Load chains

- Run queries

- Break it

Tell me what is wrong.

That is a healthier path than pretending the first version is perfect because the logo looks official.

A small example of the bigger picture

Take a campaign where the concrete infrastructure rotates every few days.

- New domain

- New IP

- New payload hash

- New redirector

- Same operator logic

Classic IOC tracking sees change everywhere.

IIM sees the chain:

entry

to staging

to payload

to dead-drop resolver

to dynamic DNS

to C2

IIMQL lets you ask for that shape:

MATCH (:entry)-->(:staging)-->(:payload)-->(:redirector)-->(:c2)

Or stricter:

MATCH (:entry)-[:download]->(:staging)-[:drops]->(:payload)-[:connect]->(:redirector)-[:resolves-to]->(:c2)

Then you can filter:

WHERE chain.confidence IN ["confirmed", "likely"]

And return what matters:

RETURN chain.chain_id, chain.actor_id, c.entity.value

(The RETURN clause assumes the C2 node is aliased in the pattern, for example (c:c2).)

That is the difference.

You are no longer asking whether one artifact is bad.

You are asking whether an operation behaves like something you already understand.

That is where infrastructure intelligence becomes more than indicator management.

Where do I find chains to query?

You can create your own chains or watch my IIM Feed Repository.

There are already some chains and patterns in there, and I'll continue to upload more in the future.

If you have patterns and chains you want to contribute, feel free to reach out or open a pull request.

License

IIMQL is published under the Apache License 2.0.

Keep the license notice and attribution intact.

- Build with it

- Ship with it

- Break it properly

Next

Part five will pull the ecosystem together.

Kraken, IIM, IIMQL, ACDP and the broader Malwarebox direction.

As one loop:

- Observe infrastructure

- Model the chain

- Query the structure

- Prioritize defensively

- Feed the lessons back into the model

That is the actual point of the ecosystem.

Not collecting more data.

Everyone has more data.

The point is making adversary infrastructure understandable, comparable and queryable in a way that survives rotation.

And if that can help push a more independent European CTI ecosystem into existence, even better.

For now:

- Read the IIM article

- Try IIMQL

- Run it against the examples

- Write ugly queries

- Find the weird edge cases

- Open issues

That is how this gets better.

| Resource | Link |
| --- | --- |
| IIM Website | https://iim.malwarebox.eu |
| IIMQL Website | https://iimql.malwarebox.eu |
| PyPI | Coming soon |
| GitHub IIM | https://github.com/MalwareboxEU/IIM |
| GitHub IIMQL | https://github.com/MalwareboxEU/IIMQL |
| Malwarebox IIM Feed (Updated Weekly) | https://github.com/MalwareboxEU/IIM-Feed |